Generated by Bing Image Creator: Photograph of spring fields with tiny flowers and blue sky and white clouds

This post follows on from the previous post. In this post, I run a simple linear regression using the infer package workflow.

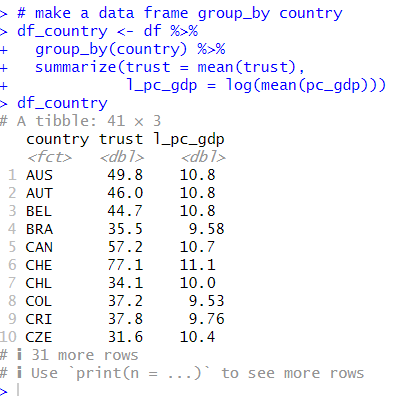

First, I make a data frame grouped by country.

I use the data frame above. The dependent variable is trust, and the independent variable is l_pc_gdp.

The model I have in mind is:

trust = beta_0 + beta_1 * l_pc_gdp + u

where beta_0 is the intercept, beta_1 is the slope, and u is the error term.

I would like to check whether beta_1 is different from 0, and in particular whether it is greater than 0.

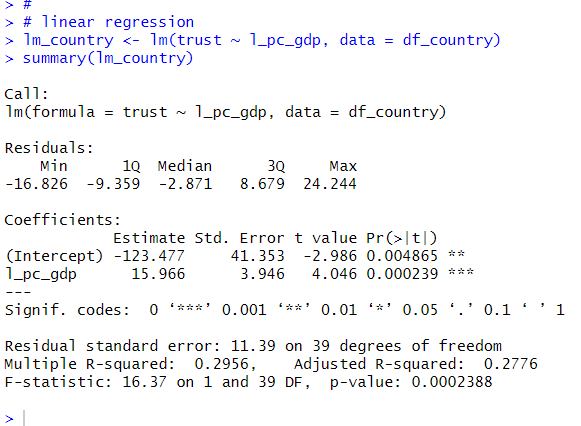

Usually, the easiest way to check this is with the lm() function.

The result above gives the estimated model trust = -123.477 + 15.966 * l_pc_gdp + u, that is, beta_0 is estimated at -123.477 and beta_1 at 15.966.
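For a single regressor, the intercept and slope that lm() reports have a closed form: the slope is the sum of cross-deviations of x and y divided by the sum of squared deviations of x, and the line passes through the means. The R code in this post is shown as images, so here is a minimal sketch in plain Python instead. The function name `ols_fit` and the x/y values are hypothetical, made up for illustration; they are not the trust / l_pc_gdp data.

```python
# Closed-form ordinary least squares for one regressor.
# The data below are hypothetical, not the post's country data.
from statistics import mean


def ols_fit(x, y):
    """Return (intercept, slope) of the least-squares line y = b0 + b1 * x."""
    x_bar, y_bar = mean(x), mean(y)
    # Slope: sum of cross-deviations divided by sum of squared x-deviations
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx
    # Intercept: the fitted line passes through (x_bar, y_bar)
    b0 = y_bar - b1 * x_bar
    return b0, b1


# Hypothetical points lying exactly on y = 1 + 2x
x = [1.0, 2.0, 3.0, 4.0]
y = [3.0, 5.0, 7.0, 9.0]
print(ols_fit(x, y))  # (1.0, 2.0)
```

Applied to the country data, this computation should reproduce the lm() coefficients quoted above.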

I will get a similar result using the infer package workflow.

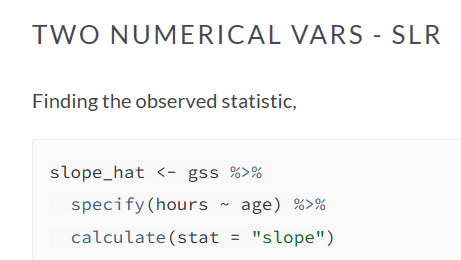

I follow the "Full infer Pipeline Examples" article in the infer documentation.

Let's start.

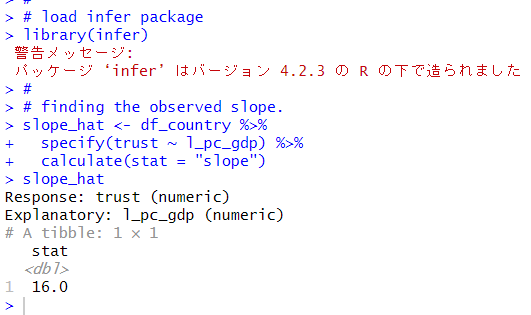

The lm() function gives 15.966 and the infer package workflow gives 16.0, which is the same value, just rounded in the printout.

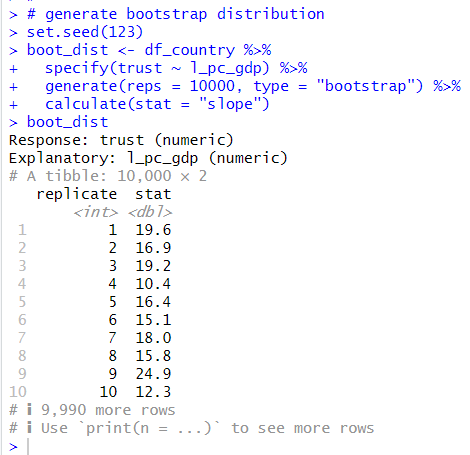

Next, I make a bootstrap distribution of the slope estimate.
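The idea behind a bootstrap distribution of the slope is to resample the rows (here, countries) with replacement, refit the slope on each resample, and collect the results. Below is a minimal plain-Python sketch of that pairs bootstrap, assuming hypothetical data, 1000 replicates, and a fixed seed; the names `slope` and `bootstrap_slopes` are made up for illustration, and this is not infer's implementation.

```python
# Pairs (row) bootstrap of the OLS slope, in plain Python.
# Data, reps and seed are hypothetical illustration values.
import random
from statistics import mean


def slope(x, y):
    """Least-squares slope of y on x."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    return sxy / sxx


def bootstrap_slopes(x, y, reps=1000, seed=2024):
    """Resample (x, y) rows with replacement and refit the slope each time."""
    rng = random.Random(seed)
    n = len(x)
    slopes = []
    while len(slopes) < reps:
        idx = [rng.randrange(n) for _ in range(n)]
        xs = [x[i] for i in idx]
        ys = [y[i] for i in idx]
        # If every resampled x is identical the slope is undefined; draw again
        if len(set(xs)) < 2:
            continue
        slopes.append(slope(xs, ys))
    return slopes


# Hypothetical data scattered around y = 1 + 2x
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [3.1, 4.8, 7.2, 9.1, 10.7, 13.2, 15.1, 16.8]
boot = bootstrap_slopes(x, y)
print(len(boot), round(slope(x, y), 3))  # 1000 1.988
```

Resampling whole rows keeps each x paired with its own y, so the spread of `boot` reflects how the slope varies across samples without assuming a particular error distribution.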

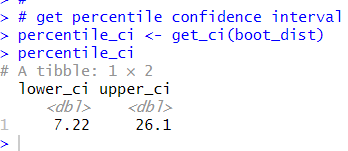

Then, I get a confidence interval from this distribution.
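A percentile confidence interval simply takes the middle 95% of the bootstrap statistics: the 2.5th and 97.5th percentiles. The plain-Python sketch below assumes linear interpolation between order statistics (the rule of R's default `quantile(type = 7)`); whether infer uses exactly this rule is an assumption, and the helper names `quantile` and `percentile_ci` are hypothetical.

```python
# Percentile confidence interval from a list of bootstrap statistics.
# The interpolation rule (R's type 7) is an assumption about infer's behaviour.
import math


def quantile(sorted_vals, p):
    """Linearly interpolated p-quantile of an already sorted list."""
    h = (len(sorted_vals) - 1) * p
    lo = math.floor(h)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (h - lo) * (sorted_vals[hi] - sorted_vals[lo])


def percentile_ci(values, level=0.95):
    """Cut off (1 - level) / 2 of the values in each tail."""
    s = sorted(values)
    tail = (1 - level) / 2
    return quantile(s, tail), quantile(s, 1 - tail)


# Toy input: the integers 0..100 stand in for bootstrap slopes
lower, upper = percentile_ci(list(range(101)))
print(round(lower, 6), round(upper, 6))  # 2.5 97.5
```

With a real bootstrap distribution of slopes in place of the toy list, `lower` and `upper` are the interval end points.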

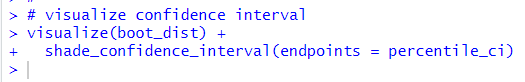

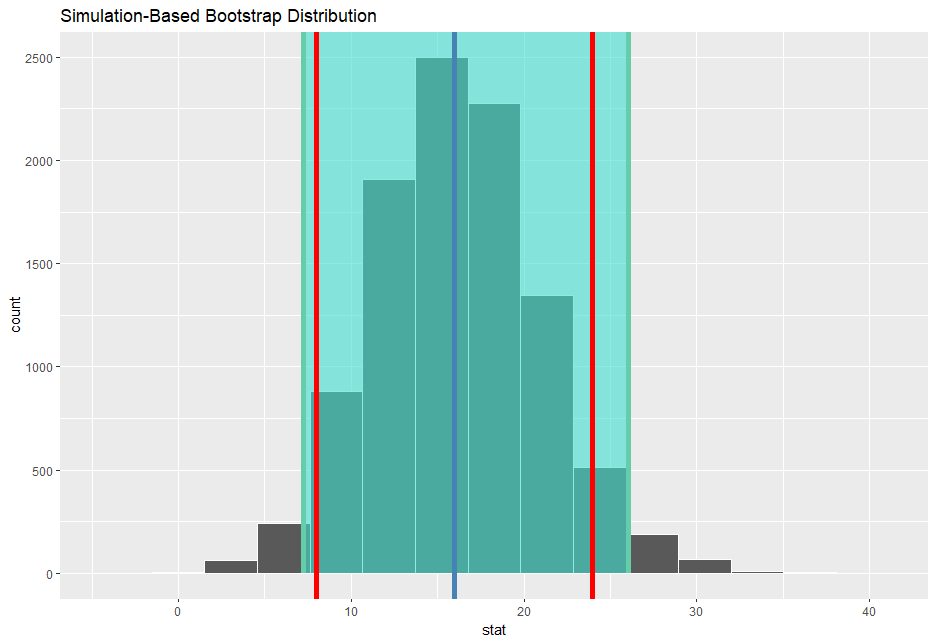

Then, I visualize the bootstrap distribution and the confidence interval.

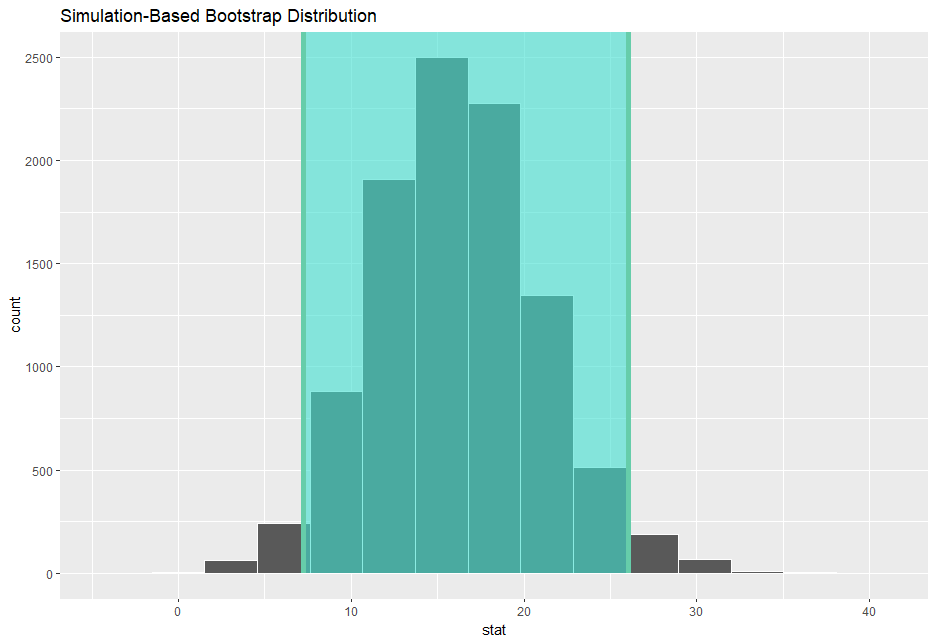

The confidence interval does not include 0, and I am 95% confident that the true slope is between 7.22 and 26.1.

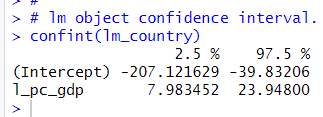

With an lm object, I can get a confidence interval using the confint() function.
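For reference, confint() on an lm fit computes a t-based interval. For the slope in this model it is the standard textbook OLS formula below, which assumes homoskedastic, normally distributed errors u. Here x_i is l_pc_gdp for country i, n is the number of countries, and \hat{u}_i are the residuals:

```latex
\hat{\beta}_1 \pm t_{0.975,\, n-2}\,\widehat{\mathrm{SE}}(\hat{\beta}_1),
\qquad
\widehat{\mathrm{SE}}(\hat{\beta}_1) = \sqrt{\frac{s^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}},
\qquad
s^2 = \frac{1}{n-2} \sum_{i=1}^{n} \hat{u}_i^2
```

The single variance estimate s^2 is where the constant-variance assumption enters: every observation is treated as having the same error spread.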

Let's add these values to the bootstrap distribution histogram above.

The red vertical lines show the confidence interval from the lm object, in other words, the theoretical (formula-based) confidence interval.

You can see that the theoretical confidence interval is narrower than the simulation-based (infer package workflow) confidence interval. This is because the theoretical confidence interval is calculated under assumptions that the bootstrap does not rely on, such as the error term having constant variance (homoskedasticity), being normally distributed, and so on.
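To see how the constant-variance assumption can matter, the plain-Python sketch below compares the formula standard error of the slope with the standard deviation of pairs-bootstrap slopes on made-up data whose noise grows with distance from the centre of x. Everything here is hypothetical, including the data-generating process and the helper names `fit`, `formula_se` and `bootstrap_se`; in a setup like this the bootstrap will typically report the larger value, but this is an illustration, not a statement about the country data.

```python
# Formula-based vs bootstrap standard error of the slope on hypothetical
# heteroskedastic data (error SD grows with |x - mean(x)|).
import math
import random
from statistics import mean, stdev


def fit(x, y):
    """Return (intercept, slope) of the least-squares line."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    return y_bar - b1 * x_bar, b1


def formula_se(x, y):
    """Classical OLS slope standard error (assumes constant error variance)."""
    b0, b1 = fit(x, y)
    n = len(x)
    s2 = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y)) / (n - 2)
    x_bar = mean(x)
    return math.sqrt(s2 / sum((xi - x_bar) ** 2 for xi in x))


def bootstrap_se(x, y, reps=2000, seed=1):
    """Standard deviation of slopes refitted on rows resampled with replacement."""
    rng = random.Random(seed)
    n = len(x)
    slopes = []
    while len(slopes) < reps:
        idx = [rng.randrange(n) for _ in range(n)]
        xs = [x[i] for i in idx]
        if len(set(xs)) < 2:  # slope undefined; draw again
            continue
        slopes.append(fit(xs, [y[i] for i in idx])[1])
    return stdev(slopes)


# Hypothetical data: error SD proportional to |x - 15.5|
rng = random.Random(0)
x = list(range(1, 31))
y = [1 + 2 * xi + rng.gauss(0, 0.5 * abs(xi - 15.5)) for xi in x]
print(round(formula_se(x, y), 3), round(bootstrap_se(x, y), 3))
```

The bootstrap standard error does not pool the residuals into one variance, so observations with larger errors at the extremes of x contribute more of the slope's variability.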

In summary, from the simple linear regression analysis above using the infer package workflow, I am 95% confident that, with the data grouped by country, the slope on l_pc_gdp in the model trust = beta_0 + beta_1 * l_pc_gdp + u is between 7.22 and 26.1.

That's it. Thank you!

The next post is:

To read the series from the first post: